When a computer program can outperform your human doctor, should you switch, ask ROX MIDDLETON, LIAM SHAW and MIRIAM GAUNTLETT

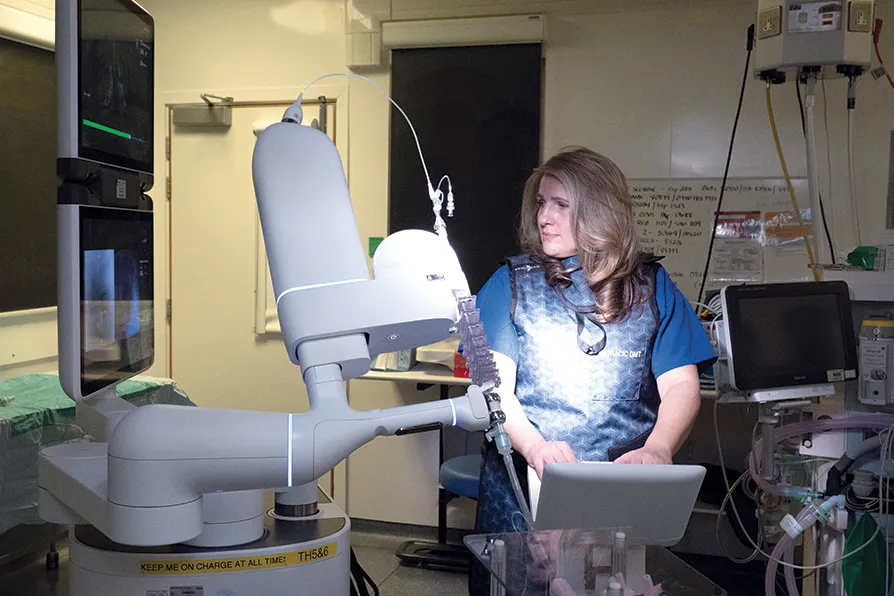

WHEN SPEED IS OF THE ESSENCE: Stephanie Fraser, a thoracic surgeon, demonstrates AI and robot technology used by NHS England to speed up lung cancer diagnosis under the new NHS pilot, at Guy's and St Thomas' Hospital, London, January 2026

IN THE 1970s, a PhD student called Edward Shortliffe wrote a computer program to try to help doctors prescribe antibiotics. He wanted the program to be able to take the same information that a doctor had about their patient and guess the bacteria causing the infection, helping to decide which antibiotic to prescribe.

The main difficulty with this idea was that the information doctors had was always uncertain and imperfect.

It was easy to see how algorithms might work for a nice simple flowchart of Yes and No, but less obvious how to write one that captured how doctors thought through their choices in this uncertain situation.

Ideally he would use probabilities based on data — 70 per cent of the time do this, 30 per cent that — but often the data seemed too poor to work those out properly. Yet Shortliffe saw that doctors nevertheless had implicit judgements about these probabilities.

Being a good doctor was about the art of good guessing. He wanted to reproduce that “inexact reasoning” and build the rules the doctors were implicitly using into a computer as an early form of AI.

The program he wrote was called MYCIN (because lots of antibiotics, like streptomycin, end in that suffix). It was made up of a set of rules based on probabilities.

These probabilities weren’t calculated using statistics, but from what doctors told him: in their experience, they might say, it was 70 per cent likely that infection A needed antibiotic B.

Shortliffe couldn’t test these probabilities — but he didn’t need to, because he was just trying to replicate how doctors thought, not what the “real” probabilities were.

The tricky part was plugging all the rules together. He was inspired by a contemporary AI program where researchers had encoded information about South American geography into a network of rules, allowing their program to carry out a passable text conversation with a user.

But medicine wasn’t like South American geography: “We found it challenging to transfer a page or two from a medical textbook into a network of sufficient richness for our purposes.”

MYCIN therefore kept all the rules in play, allowing multiple hypotheses to be considered as possibly true with a certain percentage, even if only one of them was eventually used as the basis for treatment. This was probably similar to how doctors were thinking: in medicine nothing is ever ruled out, however unlikely.
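To make the idea concrete, here is a minimal sketch in Python of a rule set that keeps several hypotheses in play at once. It is not Shortliffe's code. The findings, organisms and confidence values are hypothetical placeholders, and the `combine` formula is an assumption: it resembles the way MYCIN's confidence scores are usually described as being merged when two rules support the same conclusion.

```python
from collections import defaultdict

# Hypothetical rules: (findings required, hypothesis supported, confidence).
# The confidences stand in for what an expert might state from experience;
# none of these values come from MYCIN itself.
RULES = [
    ({"finding_1", "finding_2"}, "organism_A", 0.7),
    ({"finding_3"}, "organism_A", 0.4),
    ({"finding_1"}, "organism_B", 0.3),
    ({"finding_4"}, "organism_C", 0.6),
]


def combine(a, b):
    """Merge two supportive confidences; the result never exceeds 1."""
    return a + b * (1 - a)


def evaluate(findings, rules=RULES):
    """Apply every rule whose findings are all present and rank the hypotheses."""
    scores = defaultdict(float)
    for required, hypothesis, confidence in rules:
        if required <= findings:  # all required findings were observed
            scores[hypothesis] = combine(scores[hypothesis], confidence)
    # Every supported hypothesis stays in the list, however weak.
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


ranking = evaluate({"finding_1", "finding_2", "finding_3"})
for hypothesis, score in ranking:
    print(f"{hypothesis}: {score:.2f}")
```

With these made-up inputs, two rules reinforce `organism_A` while `organism_B` stays on the list with a low score, which mirrors the point that an unlikely hypothesis is ranked lower rather than discarded.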

MYCIN performed well at replicating doctors’ decisions but it was never used to treat patients. However, by proving that it was possible to encode doctors’ thinking into a set of rules, it inspired future researchers.

Formal tests of diagnostic reasoning, where doctors are given standardised information and then scored on their responses, paved the way for a more rules-based approach to medicine.

Now, 50 years later, research published in the journal Science has shown that a new generation of AI systems can seemingly outperform doctors in standard tests and vignettes of real clinical cases.

The researchers tested “reasoning models” built by OpenAI. These are large language models (LLMs) that work through a problem step-by-step before responding to a prompt, in a way that is meant to mirror the procedural way humans often think through problems.

These models are more flexible than MYCIN: they don’t have a set of hard-coded rules about medicine, but just respond to prompts based on the huge amounts of text they’ve been trained on.

In a range of tests the models, on average, did better than real doctors. For example, when tested on triaging 76 emergency department cases, with the hospital records passed in as unprocessed text, one of the models achieved exact or very-close diagnostic accuracy in 51 cases. That was a lot better than two expert doctors, who succeeded in only 41 and 42. Other results are similarly impressive.
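As a quick check on the size of that gap, the counts reported above can be turned into rates. The short Python sketch below only restates the article's figures; the labels `model`, `doctor_1` and `doctor_2` are placeholders, and the calculation says nothing about statistical uncertainty in a sample of 76 cases.

```python
TOTAL_CASES = 76

# Cases with an exact or very close diagnosis, as reported in the article.
correct = {"model": 51, "doctor_1": 41, "doctor_2": 42}

# Share of the 76 cases each one got right.
rates = {name: hits / TOTAL_CASES for name, hits in correct.items()}
for name, rate in rates.items():
    print(f"{name}: {rate:.1%}")

# How many more cases the model got right than the better of the two doctors.
gap = correct["model"] - max(correct["doctor_1"], correct["doctor_2"])
print(f"gap over the better doctor: {gap} cases")
```

That works out at roughly 67 per cent for the model against about 54 and 55 per cent for the two doctors, a difference of nine cases over the better of the pair.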

This study is a powerful sign that the impact of AI in healthcare could be dramatic. The prevailing idea in healthcare right now is that doctors will routinely collaborate with AI. But as two other researchers commenting on the work put it, the study raises the question of whether, for certain tasks, AI could do better working independently.

Doctors, nurses and other humans are needed for lots of aspects of healthcare, but perhaps diagnoses should often be left to AI.

That brings challenges. It’s helpful to compare the situation to autopilots in planes. Autopilots consistently outperform human pilots in routine conditions, but human pilots still exist because in emergencies and unpredictable situations, we prize human judgement.

However, pilots know that judgement and skill are built on past experience and mistakes. Without deliberate practice, skills atrophy, which is why pilots take over from the autopilot for tasks like landing, even though the autopilot would routinely do it just fine.

Integrating AI into healthcare poses similar risks. The knowledge that is passed to doctors in their training, however imperfect, has been handed on through generations. The cognitive offloading through the use of AI threatens that culture.

As computers have become more powerful since the 20th century, doctors have been encouraged to think like computers, with standardised, logical routines. But the brain is not a computer, and a doctor is not a robot.

There is a real danger that AI could improve the average standard of treatment while reducing doctors’ abilities, thus reducing the very best care, particularly in emergencies.

The gut feeling that might make a particular doctor do something differently for the particular patient in front of them, without being able to articulate exactly why, becomes an increasingly risky basis for a decision.

It’s nice to believe that AI models could automatically improve our health by catching doctors’ mistakes without any downsides. But we don’t live in an ideal world. There will certainly be issues with real-world implementation that can’t be captured by standardised tests.

An emphasis on “correctness” may also threaten more recent developments in medicine, where patients are encouraged to discuss their own diagnosis and to share their ideas, rather than blindly accepting what the doctor tells them.

This movement towards meaningful collaboration in medicine could be threatened by a technology that allows the doctor to be “right” more of the time. Not every patient needs a single, strict diagnosis.

Diagnosis should be a means to an end, and doctors should be trying to collaborate with the patient on the best care for them, not simply to get the highest mark in an exam.

Another major concern is that although current models are free or cheap to use, the future business plan of many major AI companies depends on locking customers into expensive long-term contracts.

The current enthusiasm plays into the companies’ hands. Once the models become supposedly indispensable for healthcare, hospitals may be unable to transition away from them as costs dramatically rise. Health inequality may soar.

This new research shows that ignoring AI in healthcare isn’t an option. Ruthless examination of the costs and benefits of these models, and the development of alternatives that don’t rely on major tech companies, is now one of medicine’s biggest challenges.